Anthropic has rolled out Claude Opus 4.6, a significant upgrade to its most capable AI model, and the message is clear: this is not about flashy demos—it’s about sustained, professional-grade work. The new release sharpens Claude’s ability to plan, reason, and operate autonomously across long, complex tasks, particularly in software development and knowledge-heavy jobs.

For businesses and developers, the update signals a shift away from short, chat-style AI interactions toward systems that can stay productive over hours—or even days—of real work.

A model built for longer attention spans

The defining technical leap in Claude Opus 4.6 is its 1 million token context window, now available in beta. In practical terms, this allows the model to absorb and reason over massive codebases, legal documents, financial records, or research archives without losing track of critical details.

This matters because “context decay” has been one of AI’s biggest weaknesses. As conversations or tasks grow longer, most models begin to forget earlier instructions or misinterpret dependencies. According to Anthropic, Opus 4.6 makes meaningful progress here, retaining accuracy deep into extended sessions where earlier versions, and many competitors, struggled.

Equally important is how the model uses that context. Anthropic says Opus 4.6 revisits its own reasoning more carefully, catching mistakes during code review and debugging instead of compounding them. That behavior is subtle, but it’s the difference between a helpful assistant and something teams can actually rely on.

Why coding is at the center of this release

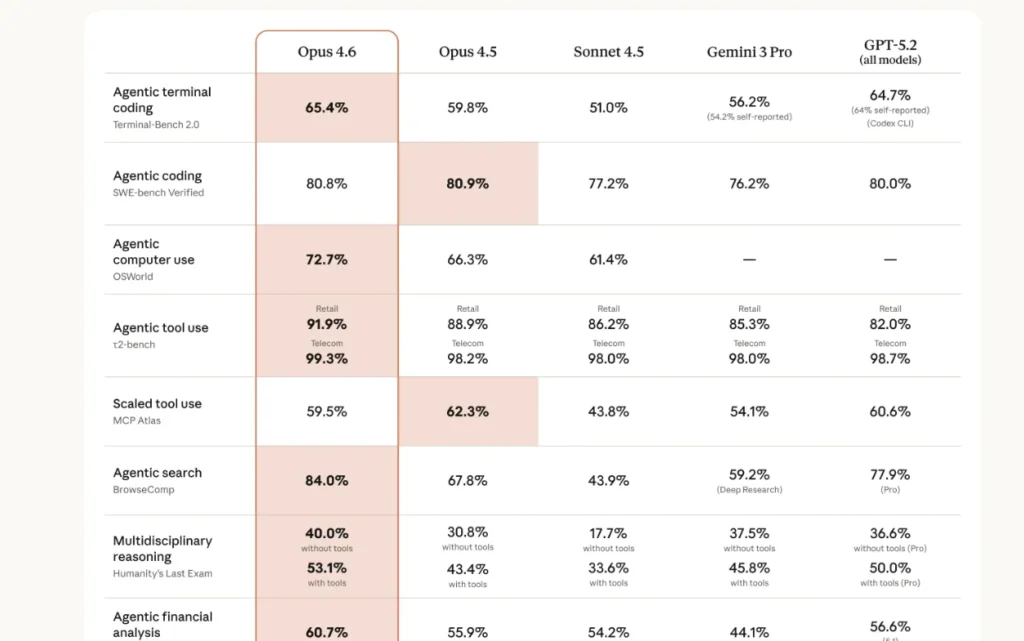

While Opus 4.6 can handle financial analysis, research, and document creation, software engineering is where the improvements are most pronounced. On Terminal-Bench 2.0, a widely cited evaluation for agentic coding, the model posts the top score. It also topped Humanity’s Last Exam, a multidisciplinary reasoning test designed to expose brittle logic.

One comparison stands out: on GDPval-AA, which measures performance on economically valuable professional tasks, Opus 4.6 outscored OpenAI’s GPT-5.2 by roughly 144 Elo points. That margin suggests not just incremental progress, but a real competitive advantage in applied knowledge work.
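To put the 144-point gap in perspective, Elo differences are conventionally converted into an expected head-to-head score with a logistic formula. The sketch below assumes the standard chess-style convention (base 10, scale 400); whether GDPval-AA uses exactly that scale is an assumption, and the function name is purely illustrative.

```python
def elo_expected_score(rating_gap: float, scale: float = 400.0) -> float:
    """Expected score of the higher-rated side for a given Elo gap.

    Assumes the conventional logistic Elo model (base 10, scale 400).
    """
    return 1.0 / (1.0 + 10 ** (-rating_gap / scale))


# A 144-point lead under the assumed convention
print(f"{elo_expected_score(144):.3f}")  # prints 0.696
```

Under that assumption, a 144-point lead means the higher-rated model would be expected to come out ahead in roughly 70% of pairwise comparisons.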

Insiders will notice another detail: Anthropic kept pricing flat at $5 per million input tokens and $25 per million output tokens, even as capabilities expanded. That’s a strategic move aimed squarely at enterprise adoption.
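For teams budgeting a workload, the quoted rates translate into a simple back-of-the-envelope calculation. The sketch below uses only the two prices cited above; the function name and the example token counts are hypothetical, and any surcharges, discounts, or tiered rates are deliberately left out.

```python
# Rates quoted in the article, in US dollars per million tokens
INPUT_USD_PER_MTOK = 5.0
OUTPUT_USD_PER_MTOK = 25.0


def estimate_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Rough cost of one request at the flat per-million-token rates."""
    input_cost = input_tokens / 1_000_000 * INPUT_USD_PER_MTOK
    output_cost = output_tokens / 1_000_000 * OUTPUT_USD_PER_MTOK
    return input_cost + output_cost


# Hypothetical request: 200,000 tokens in, 10,000 tokens out
print(f"${estimate_cost_usd(200_000, 10_000):.2f}")  # prints $1.25
```

Because output tokens cost five times as much as input tokens, long generated answers tend to dominate the bill faster than large prompts do.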

From chatbots to autonomous coworkers

Anthropic’s broader bet is visible in how Opus 4.6 integrates with its product ecosystem. Within Cowork and Claude Code, the model can now manage multi-step workflows, coordinate with other agents, and decide when deeper reasoning is worth the cost and latency.

Developers gain new controls over how “hard” the model thinks, choosing among four effort levels: low, medium, high, and maximum. There’s also context compaction, which lets the model summarize older material on the fly to keep long-running tasks alive without hitting hard limits.
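The general idea behind context compaction can be illustrated with a toy example. This is not Anthropic's implementation: the `compact` function, the whitespace-based token count, and the stub summarizer are all assumptions made for illustration. A real system would use the model's tokenizer and a model-generated summary.

```python
from typing import Callable, List


def count_tokens(text: str) -> int:
    # Crude stand-in for a tokenizer: one "token" per whitespace-separated word
    return len(text.split())


def compact(
    messages: List[str],
    budget: int,
    keep_recent: int,
    summarize: Callable[[List[str]], str],
) -> List[str]:
    """Replace older messages with one summary once the budget is exceeded."""
    total = sum(count_tokens(m) for m in messages)
    if total <= budget or len(messages) <= keep_recent:
        return list(messages)  # nothing to compact
    cut = len(messages) - keep_recent
    older, recent = messages[:cut], messages[cut:]
    # Older material is collapsed; the most recent messages stay verbatim
    return [summarize(older)] + recent


def stub_summary(old: List[str]) -> str:
    # Hypothetical summarizer; a real one would call a model
    return f"[summary of {len(old)} earlier messages]"


history = ["a b c d", "e f g h", "i j", "k l"]
print(compact(history, budget=6, keep_recent=2, summarize=stub_summary))
# prints ['[summary of 2 earlier messages]', 'i j', 'k l']
```

The trade-off is the usual one: the summary frees up room for new work, but any detail it drops is no longer available to the model later in the task.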

This is less about convenience and more about trust. When AI systems can plan ahead, adjust effort, and maintain coherence, they start to resemble junior teammates rather than tools that need constant supervision.

Safety without slowing down

More capable AI models often raise concerns about misuse. Anthropic is leaning heavily on its safety credentials here. According to the company’s system card, Opus 4.6 shows low rates of deceptive or misaligned behavior and fewer unnecessary refusals than earlier Claude models.

Notably, Anthropic added new cybersecurity safeguards as the model’s technical abilities increased. At the same time, the company is using Opus 4.6 defensively—helping identify and patch vulnerabilities in open-source software. That dual-use reality is becoming unavoidable in advanced AI, and Anthropic appears determined to stay ahead of it.

Why this news matters

For developers, Opus 4.6 lowers the friction of working with large, messy systems—exactly the kind that dominate real enterprises. For analysts, lawyers, and finance teams, it hints at AI that can finally follow long chains of logic without drifting.

For the broader market, this release tightens competition at the top end of AI. The gap between “consumer chatbots” and professional-grade AI systems is widening, and Opus 4.6 squarely targets the latter.

What to watch next

Over the next 6 to 24 months, expect three ripple effects. First, more companies will experiment with autonomous AI agents for internal work. Second, long-context reasoning will become table stakes, not a differentiator. Third, pricing pressure will intensify as vendors race to offer more capability without raising costs.

Claude Opus 4.6 doesn’t just raise the bar—it clarifies where the bar is headed. AI is no longer being judged by how clever it sounds in a conversation, but by how well it holds up under the weight of real work.